Is it possible to squeeze high performance out of a WordPress site? Our answer is yes! In this article we show how to get a WordPress site to run reliably under heavy load, up to 10,000 connections per second. Sustained around the clock, that comes to over 800 million visits per day.
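The per-day figure is just the per-second rate multiplied by the 86,400 seconds in a day. This assumes, unrealistically, that the peak rate is held for the whole day. Below is a minimal bash sketch of that arithmetic. `per_day` is an illustrative helper, not part of any tool:

```bash
# Illustrative helper: requests per second -> requests per day (24 * 60 * 60 = 86,400 s).
per_day() {
  local rate=$1
  echo $(( rate * 86400 ))
}

per_day 10000   # 864000000 (the headline rate)
per_day 1100    # 95040000 (the https rate measured later)
```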

First of all, we need our own virtual private server (VPS). The tests used a VPS rented from DigitalOcean for 20 USD per month, with 2 GB of RAM, 2 CPUs and 40 GB of SSD storage. The operating system was CentOS Linux release 7.3.

The rest of this text can be read almost as a step-by-step guide for experienced administrators. It lists only the parameters that differ from the defaults and improve server performance. Let's go!

Installing nginx

First, create a repository file for yum:

touch /etc/yum.repos.d/nginx.repo

Put the following text into the file:

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/OS/OSRELEASE/$basearch/

gpgcheck=0

enabled=1

Replace "OS" with "rhel" or "centos", depending on your distribution. Replace "OSRELEASE" with "5", "6" or "7" for versions 5.x, 6.x or 7.x respectively.
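The substitution can also be scripted instead of editing the file by hand. Below is a minimal bash sketch. It assumes a hypothetical helper `make_nginx_repo` that prints the repository definition above for a given distribution and release. You then redirect the output into the file as root:

```bash
# Hypothetical helper: print the nginx.org repository definition for
# $1 = distribution ("rhel" or "centos") and $2 = major release ("5", "6" or "7").
make_nginx_repo() {
  local os=$1 release=$2
  case "$os" in
    rhel|centos) ;;
    *) echo "unsupported distribution: $os" >&2; return 1 ;;
  esac
  case "$release" in
    5|6|7) ;;
    *) echo "unsupported release: $release" >&2; return 1 ;;
  esac
  # \$basearch stays literal: yum, not the shell, expands it.
  cat <<EOF
[nginx]
name=nginx repo
baseurl=http://nginx.org/packages/${os}/${release}/\$basearch/
gpgcheck=0
enabled=1
EOF
}

# Usage (as root):
#   make_nginx_repo centos 7 > /etc/yum.repos.d/nginx.repo
```

The escaped `\$basearch` matters. The text `$basearch` must reach the file literally, because yum fills it in at run time.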

Run the installation:

yum -y install nginx

Editing nginx.conf

# Set the number of worker processes automatically, equal to the number of CPUs

worker_processes auto;

# In the events section

# epoll is an efficient connection-processing method on Linux

use epoll;

# Let a worker process accept all new connections at once (otherwise it accepts them one at a time)

multi_accept on;

# In the http section

# Turn logging off, or down to critical errors only

error_log /var/log/nginx/error.log crit; # critical errors only

access_log off;

log_not_found off;

# Don't include the port in redirects issued by nginx

port_in_redirect off;

# Maximum number of requests served over one keep-alive connection (nginx raised the default from 100 to 1000 in version 1.19.10)

keepalive_requests 100;

# Reduce buffers to reasonable sizes

# This saves memory when there are many requests

client_body_buffer_size 10K;

client_header_buffer_size 4k; # 2k may not be enough for WordPress

client_max_body_size 50m;

large_client_header_buffers 2 4k;

# Reduce timeouts

client_body_timeout 10;

client_header_timeout 10;

send_timeout 2;

# Reset timed-out connections (the client gets a TCP RST and the socket's memory is freed at once)

reset_timedout_connection on;

# Speed up TCP

tcp_nodelay on;

tcp_nopush on;

# Allow the Linux sendfile() system call to speed up file transfers

sendfile on;

# Enable compression

gzip on;

gzip_http_version 1.0;

gzip_proxied any;

gzip_min_length 1100;

gzip_types text/plain text/css application/json application/x-javascript text/xml application/xml application/xml+rss text/javascript application/javascript image/svg+xml;

gzip_disable msie6;

gzip_vary on;

# Enable caching of open files

open_file_cache max=10000 inactive=20s;

open_file_cache_valid 30s;

open_file_cache_min_uses 2;

open_file_cache_errors on;

# Set the PHP (FastCGI) read timeout

fastcgi_read_timeout 300;

# Connect to php-fpm over a Unix socket, which is faster than TCP

upstream php-fpm {

# This must match the "listen" directive in the php-fpm pool

server unix:/run/php-fpm/www.sock;

}

# Include additional configuration files

include /etc/nginx/conf.d/*.conf;
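The upstream only works if its socket path matches the `listen` value in the php-fpm pool. Below is a hedged bash sketch of a consistency check. `fpm_listen` and `check_fpm_socket` are hypothetical helpers, and the paths in the usage line are the ones used in this article:

```bash
# Hypothetical helper: print the value of the first active "listen =" line
# in a php-fpm pool file (lines starting with ";" are comments and are skipped).
fpm_listen() {
  awk -F'=' '/^[ \t]*listen[ \t]*=/ {
    gsub(/[ \t]/, "", $2); print $2; exit
  }' "$1"
}

# Hypothetical check: $1 = pool file, $2 = socket path from nginx's upstream block.
check_fpm_socket() {
  local actual
  actual=$(fpm_listen "$1")
  if [ "$actual" = "$2" ]; then
    echo "OK: php-fpm listens on $2"
  else
    echo "MISMATCH: pool listens on '${actual:-unset}', nginx expects '$2'" >&2
    return 1
  fi
}

# Usage:
#   check_fpm_socket /etc/php-fpm.d/www.conf /run/php-fpm/www.sock
```

If the check fails, set `listen = /run/php-fpm/www.sock` in the pool. The nginx.org packages run nginx as the `nginx` user, so you will likely also need `listen.owner = nginx` and `listen.group = nginx`; otherwise the workers cannot open the socket.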

Create /etc/nginx/conf.d/servers.conf and put the server sections for your sites there. Example:

# Redirect http to https

server {

listen 80;

server_name domain.com www.domain.com;

rewrite ^(.*) https://$host$1 permanent;

}

# Handle https

server {

# Enable HTTP/2, which speeds up processing (binary framing, multiplexing, etc.)

listen 443 ssl http2;

ssl_certificate /etc/nginx/ssl/domain.pem;

ssl_certificate_key /etc/nginx/ssl/domain.key;

server_name domain.com www.domain.com;

root /var/www/domain;

index index.php;

# Process PHP files

location ~ \.php$ {

fastcgi_buffers 8 256k;

fastcgi_buffer_size 128k;

fastcgi_intercept_errors on;

include fastcgi_params;

try_files $uri =404;

fastcgi_split_path_info ^(.+\.php)(/.+)$;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

fastcgi_pass php-fpm; # the upstream defined in nginx.conf

fastcgi_index index.php;

}

# Cache all static files

location ~* ^.+\.(ogg|ogv|svg|svgz|eot|otf|woff|mp4|ttf|rss|atom|jpg|jpeg|gif|png|ico|zip|tgz|gz|rar|bz2|doc|xls|exe|ppt|tar|mid|midi|wav|bmp|rtf)$ {

expires max;

add_header Cache-Control "public";

}

# Cache js and css

location ~* ^.+\.(css|js)$ {

expires 1y;

add_header Cache-Control "public"; # "expires 1y" already sends max-age=31536000

}

}

Start nginx:

systemctl start nginx

systemctl enable nginx

Installing MySQL

yum install -y https://dev.mysql.com/get/Downloads/MySQL-5.7/mysql-community-server-5.7.18-1.el7.x86_64.rpm

yum -y install mysql-community-server

Editing /etc/my.cnf

Below is a set of settings for a server with 2 GB of RAM; they go into the [mysqld] section. For a server with 1 GB, halve the buffer sizes, but leave alone the parameters set to 64M or less. All of these parameters matter, but the most important one is "query_cache_type = ON". By default, the MySQL query cache is turned off! The reason is unclear, possibly to save memory. Turning it on speeds up database access considerably, which you notice immediately on the WordPress admin pages. Note that the query cache is deprecated as of MySQL 5.7.20 and removed in MySQL 8.0, so this tip applies to MySQL 5.7 only.

max_connections = 64

key_buffer_size = 32M

# innodb_buffer_pool_chunk_size is 128M by default

innodb_buffer_pool_chunk_size = 128M

# When innodb_buffer_pool_size is less than 1024M,

# MySQL sets innodb_buffer_pool_instances to 1

innodb_buffer_pool_instances = 1

# innodb_buffer_pool_size must be equal to or a multiple of

# innodb_buffer_pool_chunk_size * innodb_buffer_pool_instances

innodb_buffer_pool_size = 512M

innodb_flush_method = O_DIRECT

innodb_log_file_size = 512M

innodb_flush_log_at_trx_commit = 2

thread_cache_size = 16

query_cache_type = ON

query_cache_size = 128M

query_cache_limit = 256K

query_cache_min_res_unit = 2k

max_heap_table_size = 64M

tmp_table_size = 64M
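MySQL adjusts innodb_buffer_pool_size automatically when the rule in the comments above is not met. Below is a bash sketch of that arithmetic. `check_buffer_pool` is a hypothetical helper that takes sizes in megabytes, and its messages paraphrase the rounding behavior described in the MySQL 5.7 manual:

```bash
# Hypothetical check: $1 = innodb_buffer_pool_size, $2 = innodb_buffer_pool_chunk_size,
# $3 = innodb_buffer_pool_instances (sizes in MB).
check_buffer_pool() {
  local pool=$1 chunk=$2 instances=$3
  local unit=$(( chunk * instances ))
  if (( unit > pool )); then
    # MySQL truncates the chunk size to pool / instances in this case.
    echo "WARN: chunk size will be truncated to $(( pool / instances ))M" >&2
    return 1
  elif (( pool % unit == 0 )); then
    echo "OK: ${pool}M is a multiple of ${unit}M"
  else
    # Otherwise the pool is rounded up to the next multiple of chunk * instances.
    echo "WARN: ${pool}M will be rounded up to $(( (pool / unit + 1) * unit ))M" >&2
    return 1
  fi
}

check_buffer_pool 512 128 1   # OK: 512M is a multiple of 128M
check_buffer_pool 256 128 1   # OK: 256M is a multiple of 128M (the halved 1 GB value)
```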

Start it:

systemctl start mysqld

systemctl enable mysqld

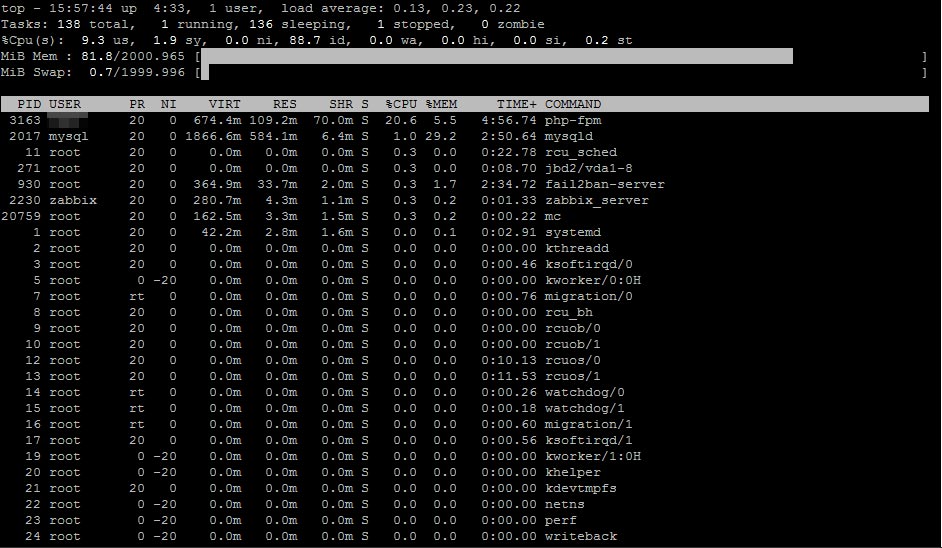

The general principle for building a high-performance WordPress site is that everything (nginx, MySQL, php-fpm) should fit into about 75% of RAM. The top command should show 20-25% of RAM free and no swap in use. This memory will come in very handy when many php-fpm processes start up to generate dynamic pages.
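The same check can be scripted instead of read off top by eye. Below is a bash sketch with hypothetical helper names. It assumes the kernel reports MemAvailable in /proc/meminfo and treats that value as the "free" memory:

```bash
# Hypothetical helpers for the "keep 20-25% of RAM free, no swap" rule.
# They read a meminfo-style file (default /proc/meminfo); values are in kB.
free_ram_percent() {
  awk '/^MemTotal:/ {t = $2} /^MemAvailable:/ {a = $2}
       END { if (t > 0) printf "%d\n", a * 100 / t }' "${1:-/proc/meminfo}"
}

swap_used_kb() {
  awk '/^SwapTotal:/ {t = $2} /^SwapFree:/ {f = $2}
       END { print t - f }' "${1:-/proc/meminfo}"
}

check_headroom() {
  local pct swap
  pct=$(free_ram_percent "$1")
  swap=$(swap_used_kb "$1")
  if (( pct >= 20 && swap == 0 )); then
    echo "OK: ${pct}% of RAM available, no swap in use"
  else
    echo "WARN: ${pct}% of RAM available, ${swap} kB of swap in use" >&2
    return 1
  fi
}

# Usage on the server itself:
#   check_headroom
```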

Installing PHP

Of course, we are only interested in version 7, which is roughly twice as fast as its predecessor, PHP 5. The webtatic repository has an excellent build, and the EPEL repository is needed as well.

Install:

rpm -Uvh https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

rpm -Uvh https://mirror.webtatic.com/yum/el7/webtatic-release.rpm

yum -y install php72w

yum -y install php72w-fpm

yum -y install php72w-gd

yum -y install php72w-mbstring

yum -y install php72w-mysqlnd

yum -y install php72w-opcache

yum -y install php72w-pear.noarch

yum -y install php72w-pecl-imagick

yum -y install php72w-pecl-apcu

yum -y install php72w-pecl-redis

Note the php72w-pecl-apcu package. It adds APCu, an in-memory cache for application data, which complements OPcache, the cache for compiled PHP bytecode. Also note php72w-pecl-redis, the PHP extension for connecting to a Redis object cache server. WordPress can use APCu as a persistent object cache through an object-cache drop-in plugin. It can use Redis through the Redis Object Cache plugin.

Editing /etc/php.ini

; Increase this on systems where PHP opens many files

realpath_cache_size = 64M

; Don't raise the default per-process memory limit unless you need to

; Most WordPress sites use around 80 MB

memory_limit = 128M

; Keep this modest to save memory for buffers

; A POST body rarely exceeds 64 MB

post_max_size = 64M

; Uploaded files usually don't exceed 50 MB

upload_max_filesize = 50M

; The following must hold:

; upload_max_filesize < post_max_size < memory_limit

; And don't forget the OPcache settings

; Immediately kill processes that block the cache

opcache.force_restart_timeout = 0

; Save memory by storing identical strings only once for all php-fpm processes

; On the 2 GB server, values above 8 did not work

opcache.interned_strings_buffer = 8

; How many PHP files can be kept in memory

; On the 2 GB server, values above 4000 did not work

opcache.max_accelerated_files = 4000

; How much memory OPcache uses, in MB

; On the 2 GB server, values above 128 did not work

opcache.memory_consumption = 128

; Bit mask in which each set bit enables a particular optimization pass

; Full optimization

opcache.optimization_level = 0xFFFFFFFF
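The size rule in the comments can be checked mechanically. Below is a bash sketch. `to_bytes` and `check_php_sizes` are hypothetical helpers that understand only the K/M/G shorthand used here. They do not handle every value php.ini accepts, such as -1 for an unlimited memory_limit:

```bash
# Hypothetical helper: convert php.ini shorthand sizes (e.g. 512K, 64M, 1G) to bytes.
to_bytes() {
  local v=${1^^}          # upper-case the suffix
  local n=${v%[KMG]}      # strip one trailing K/M/G to get the number
  case "$v" in
    *K) echo $(( n * 1024 )) ;;
    *M) echo $(( n * 1024 * 1024 )) ;;
    *G) echo $(( n * 1024 * 1024 * 1024 )) ;;
    *)  echo "$n" ;;
  esac
}

# Hypothetical check: $1 = upload_max_filesize, $2 = post_max_size, $3 = memory_limit.
check_php_sizes() {
  local upload post memory
  upload=$(to_bytes "$1"); post=$(to_bytes "$2"); memory=$(to_bytes "$3")
  if (( upload < post && post < memory )); then
    echo "OK: $1 < $2 < $3"
  else
    echo "WARN: expected upload_max_filesize < post_max_size < memory_limit" >&2
    return 1
  fi
}

check_php_sizes 50M 64M 128M   # OK: 50M < 64M < 128M
```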

Editing /etc/php-fpm.d/www.conf

; Reduce the number of child processes

;pm.max_children = 50

pm.max_children = 20

;pm.start_servers = 5

pm.start_servers = 8

;pm.max_spare_servers = 35

pm.max_spare_servers = 10

; Kill processes after 10 seconds of inactivity

; (this setting takes effect only with pm = ondemand)

pm.process_idle_timeout = 10s

; Number of requests a child process serves before it is respawned

; This helps avoid memory leaks

pm.max_requests = 500
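Two things are worth checking here. The first is how many children the memory budget allows. The second is the ordering php-fpm enforces for pm = dynamic: it rejects a pool whose pm.start_servers falls outside the spare-server range. Below is a bash sketch with hypothetical helpers. The 800 MB budget in the example is illustrative. `check_pm` is called with the stock pm.min_spare_servers = 5, which the article leaves unchanged:

```bash
# Hypothetical helper: how many php-fpm children fit into a memory budget.
# $1 = MB you can spare for PHP, $2 = average MB per worker.
max_children() {
  echo $(( $1 / $2 ))
}

# Hypothetical helper: average worker size in MB from RSS values (kB) on stdin, e.g.
#   ps --no-headers -o rss -C php-fpm | avg_rss_mb
avg_rss_mb() {
  awk '{ s += $1; n++ } END { if (n) printf "%d\n", s / n / 1024 }'
}

# Hypothetical check of the ordering php-fpm requires for pm = dynamic:
# min_spare_servers <= start_servers <= max_spare_servers <= max_children.
# Arguments: max_children start_servers min_spare_servers max_spare_servers
check_pm() {
  local max=$1 start=$2 min_spare=$3 max_spare=$4
  if (( min_spare <= start && start <= max_spare && max_spare <= max )); then
    echo "OK"
  else
    echo "WARN: need min_spare <= start <= max_spare <= max_children" >&2
    return 1
  fi
}

max_children 800 80   # 10 (illustrative: 800 MB budget, 80 MB per worker)
check_pm 20 8 5 10    # OK (the article's values with the stock min_spare_servers)
```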

Start it:

systemctl restart php-fpm

systemctl enable php-fpm

Installing Redis

Nothing tricky here, no configuration at all.

yum -y install redis

systemctl start redis

systemctl enable redis

Installing WordPress

We skip this section as self-explanatory.

The WP Super Cache plugin is a must on the WordPress site, and its default settings will do. What does it do? On the first request to a page or post, the plugin saves the generated page as a static .html file. The file is an index.html in a folder under wp-content/cache/supercache/ that mirrors the page's URL. On subsequent requests it intercepts WordPress and serves the ready-made .html from the cache instead of generating the page again. Obviously, this dramatically speeds up access to the site.
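The nginx rules later in this article rely on where these files live. Below is a bash sketch that reproduces the path those rules compute. `supercache_file` is a hypothetical helper. The layout (`index.html`, or `index-https.html` for https) comes from the article's nginx snippet, not from the plugin's documentation:

```bash
# Hypothetical helper: the WP Super Cache file the nginx rules look for.
# $1 = document root, $2 = host, $3 = request URI (with trailing slash), $4 = scheme.
supercache_file() {
  local docroot=$1 host=$2 uri=$3 scheme=$4
  local name=index.html
  [ "$scheme" = "https" ] && name=index-https.html
  echo "${docroot}/wp-content/cache/supercache/${host}${uri}${name}"
}

# Usage: is the home page of domain.com already cached?
#   f=$(supercache_file /var/www/domain domain.com / https)
#   [ -f "$f" ] && echo "cached: $f"
```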

Тесты

Поехали?

Собственно, да — можно начинать тестирование сайта. В качестве подопытного кролика выступал сайт, который вы читаете, и его копия по адресу test2.kagg.eu — все то же самое, только без https.

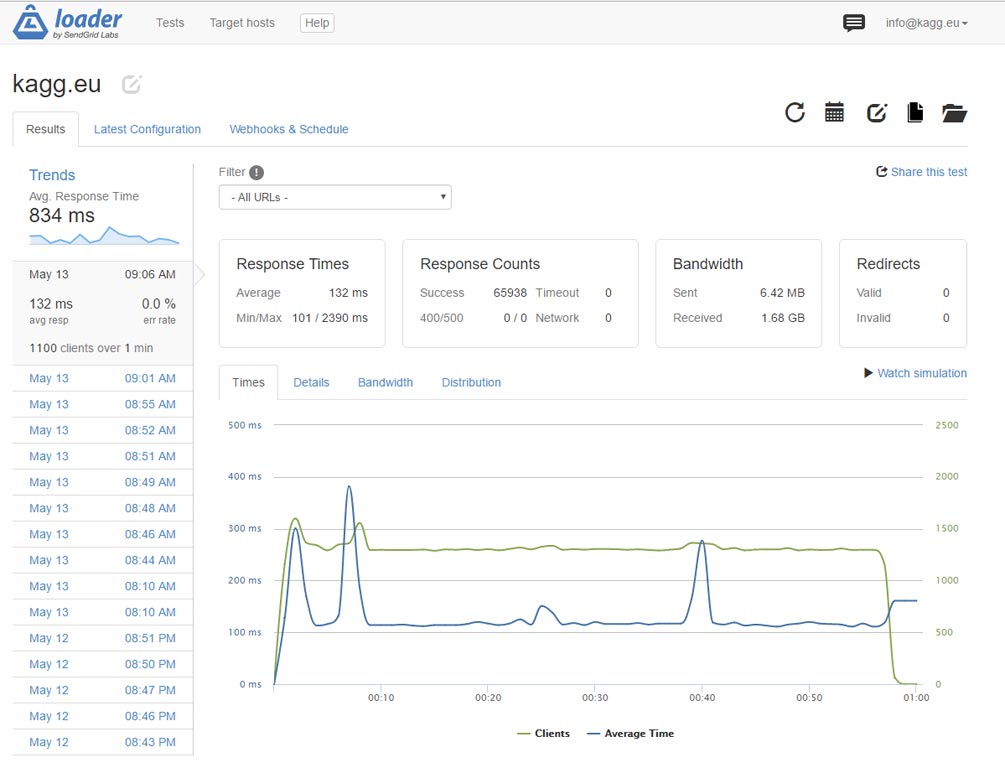

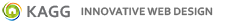

В качестве инструмента проведения нагрузочных тестов использовался loader.io. Веб-сервис предоставляет на бесплатном аккаунте возможность тестирования одного сайта со скоростью посещений до 10,000 в секунду. Но нам больше и не надо, как мы впоследствии убедимся.

Первые результаты впечатлили — без https сайт выдержал 1,000 посещений главной страницы в секунду. Размер страницы вместе с картинками — 1.6 МБ.

Microcaching

1,000 hits per second is not bad, but we want more! What can we do? nginx has a very useful feature called microcaching: it caches files and other resources for a short time, from seconds to minutes. When requests arrive in quick succession, subsequent users get the data from the microcache, which substantially reduces response time.
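Here is a back-of-the-envelope estimate of why this helps. Assume one hot URL requested at a steady rate and a TTL of t seconds. PHP then renders the page about once per TTL, so roughly one request in rate × t reaches php-fpm. The sketch ignores concurrent misses while an entry is first being filled; nginx's fastcgi_cache_lock directive exists for that case. `backend_share` is a hypothetical helper:

```bash
# Hypothetical estimate: share of requests for one hot URL that reach php-fpm
# when responses are microcached ($1 = requests per second, $2 = TTL in seconds).
backend_share() {
  local rate=$1 ttl=$2
  echo "1 in $(( rate * ttl ))"
}

backend_share 3000 30   # 1 in 90000 (30 s is the TTL configured below)
backend_share 1000 1    # 1 in 1000
```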

Edit /etc/nginx/nginx.conf and insert one line before the final include in the code above:

fastcgi_cache_path /var/cache/nginx/fcgi levels=1:2 keys_zone=microcache:10m max_size=1024m inactive=1h;

Edit /etc/nginx/conf.d/servers.conf and add the following to the "location ~ \.php$ {" section:

fastcgi_cache microcache;

fastcgi_cache_key $scheme$host$request_uri$request_method;

fastcgi_cache_valid 200 301 302 30s;

fastcgi_cache_use_stale updating error timeout invalid_header http_500;

fastcgi_pass_header Set-Cookie;

fastcgi_pass_header Cookie;

fastcgi_ignore_headers Cache-Control Expires Set-Cookie;
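To see what the microcache actually stores, it helps to know where nginx puts an entry. The file name is the MD5 of the cache key. With levels=1:2, the first subdirectory is the last hex digit of that hash and the second is the two digits before it. Below is a bash sketch. `fcgi_cache_file` is a hypothetical helper, and the key must be built exactly like fastcgi_cache_key above ($scheme$host$request_uri$request_method):

```bash
# Hypothetical helper: path of a microcache entry.
# $1 = fastcgi_cache_path directory, $2 = cache key,
# e.g. "httpsdomain.com/GET" for a GET of https://domain.com/
fcgi_cache_file() {
  local dir=$1 key=$2 hash
  hash=$(printf '%s' "$key" | md5sum | cut -d' ' -f1)
  # levels=1:2 -> last hex digit, then the two digits before it
  echo "${dir}/${hash: -1}/${hash: -3:2}/${hash}"
}

# Usage: peek at the cached home page
#   f=$(fcgi_cache_file /var/cache/nginx/fcgi "httpsdomain.com/GET")
#   head -c 600 "$f"
```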

Then, naturally, restart nginx.

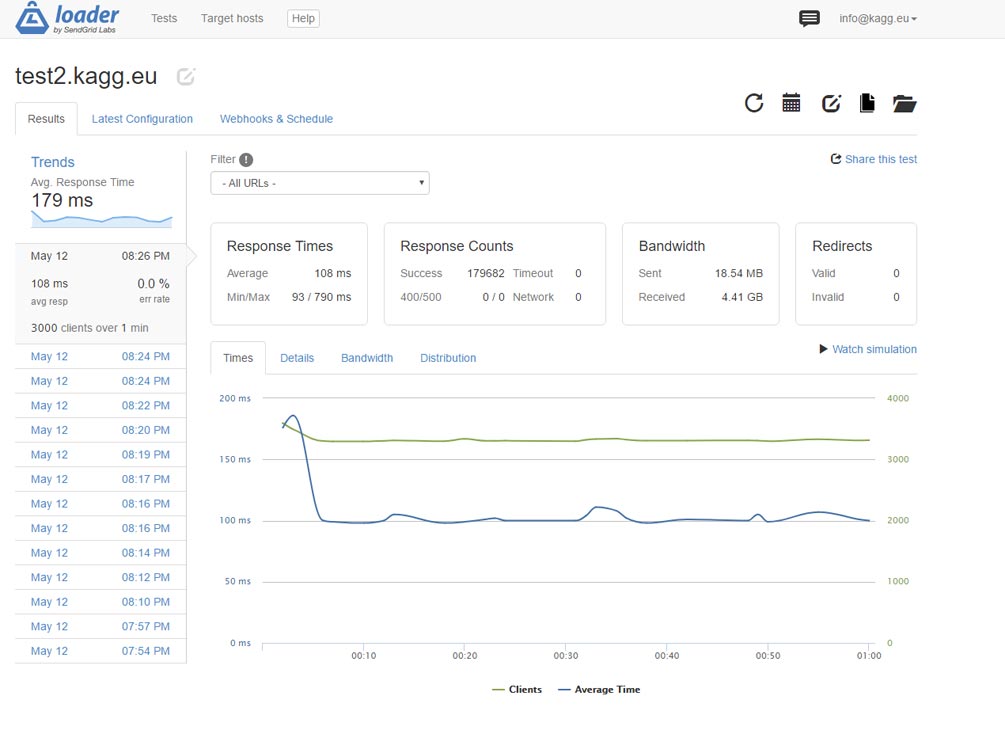

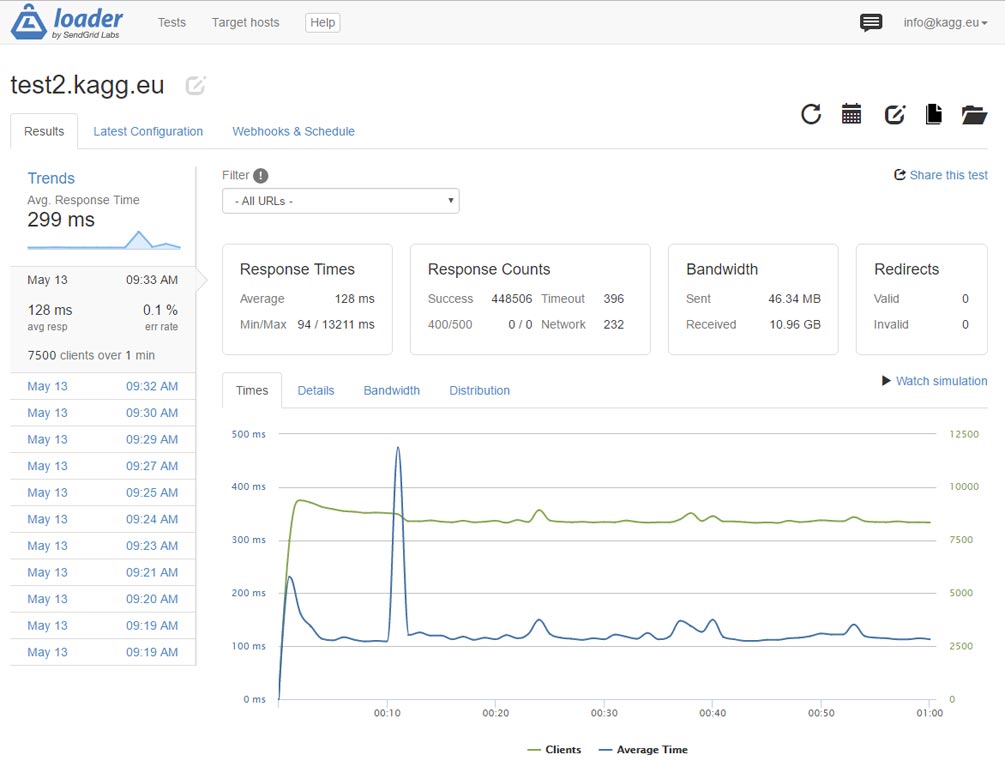

The result is impressive: performance tripled. Over http, the site serves its 1.6 MB home page 3,000 times per second! Average response time: 108 ms.

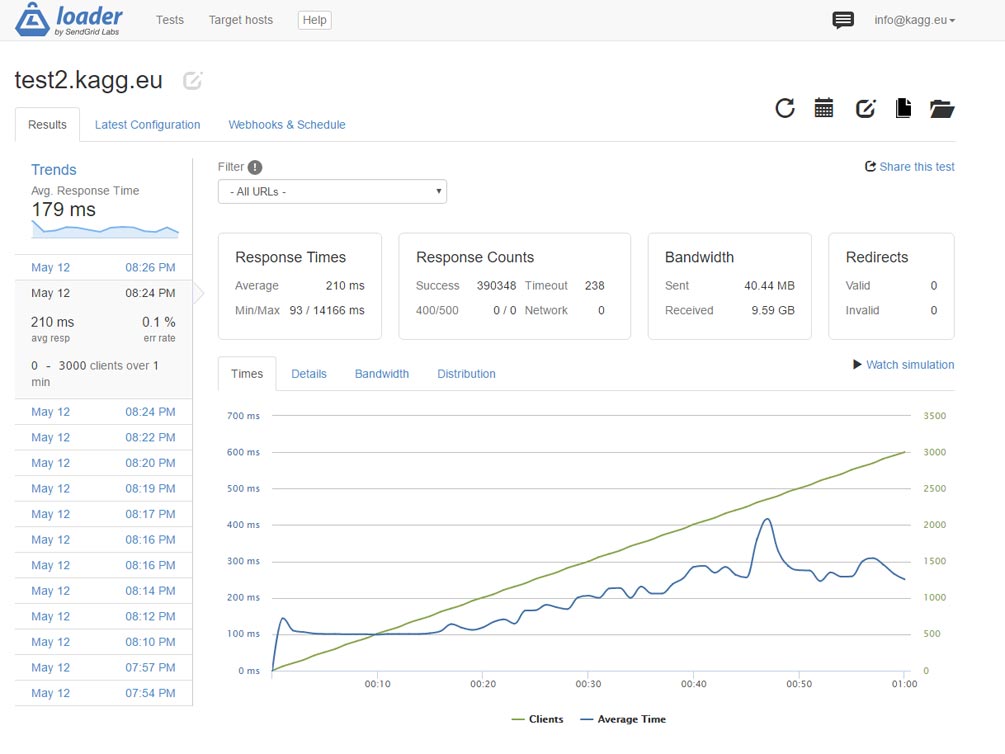

Now let's check a different scenario, where the request rate ramps up linearly from 0 to 3,000 per second. It works. Average response time: 210 ms.

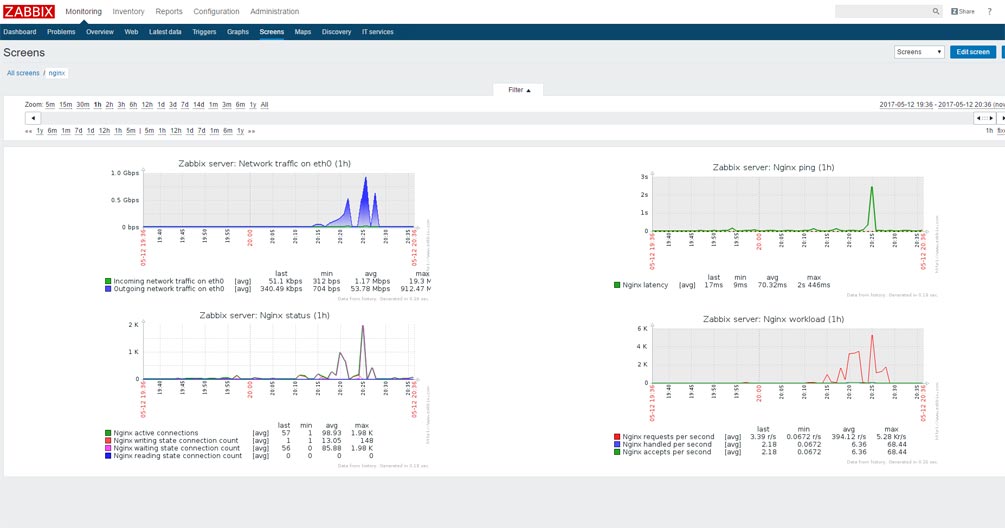

At a constant 3,000 requests per second, the data stream reaches 1 Gbit per second even with compression, as the Zabbix monitoring screen shows.
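Dividing the measured bandwidth by the request rate gives the average size of one response. Below is a bash sketch with a hypothetical helper. Roughly 40 KB per response is far below 1.6 MB. That suggests each test request transferred only the compressed HTML document, not the whole page with its images:

```bash
# Hypothetical helper: average response size in KB (1 KB = 1000 bytes)
# from $1 = bandwidth in Mbit/s and $2 = requests per second.
kb_per_response() {
  echo $(( $1 * 1000000 / 8 / $2 / 1000 ))
}

kb_per_response 1000 3000   # 41 (1 Gbit/s at 3,000 req/s, measured above)
kb_per_response 2000 7500   # 33 (2 Gbit/s at 7,500 req/s, measured later)
```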

Zabbix, кстати, крутится на том же тестовом сервере.

А что с https?

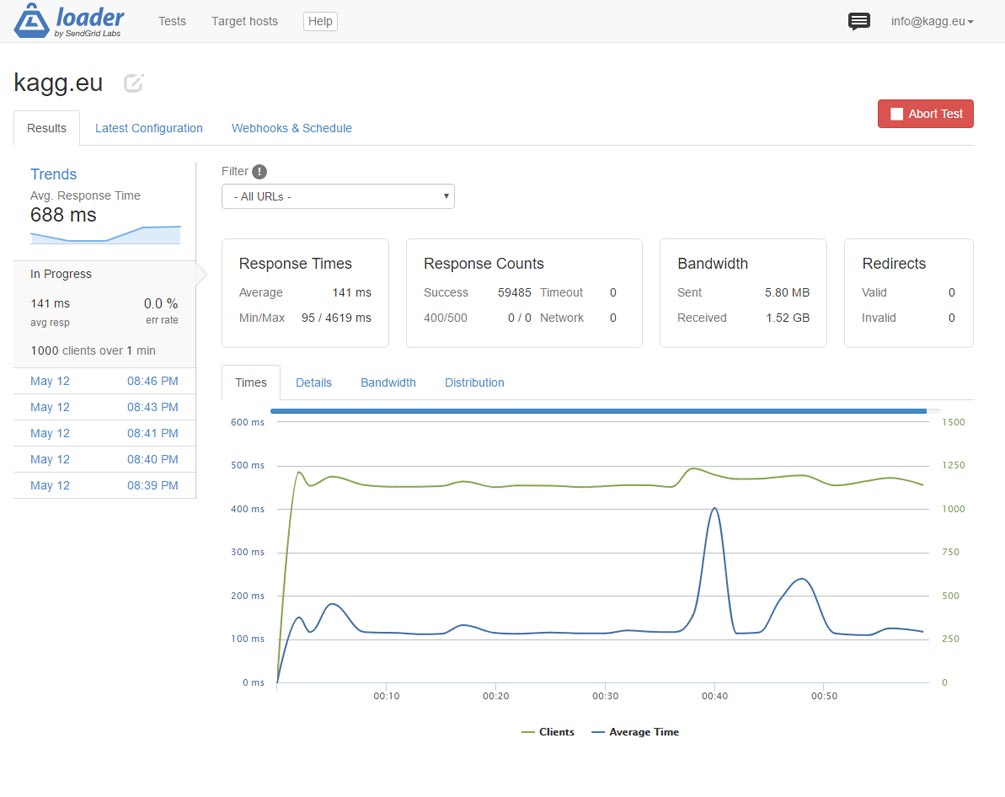

А с https, как и ожидалось, существенно медленнее. Слишком много операций по согласованию ключей, хешированию, шифрованию и т.д. Забавно наблюдать в команде top в консоли, как два процесса nginx пожирают каждый 🙂 по 99% процессора. Вот результат — 1,000 соединений в секунду:

Результаты совсем неплохие, но мы можем больше!

Rewrite в nginx

Давайте задумаемся — что сейчас происходит при обращении к странице?

- nginx принимает запрос, видит, что надо обратиться к index.php

- nginx запускает php-fpm (что не быстро совсем)

- WordPress начинает работу

- В самом начале загрузки встревает плагин WP Super Cache и подсовывает готовый .html вместо бесконечно долгой (в этих временных терминах) генерации страницы

Все это неплохо, но: процесс php-fpm стартовать надо, а потом еще какой-никакой, но php код выполнить, чтобы отдать .html.

Есть решение, как обойтись вообще без php. Делать rewrite сразу на nginx.

Снова правим /etc/nginx/conf.d/servers.conf — и после строки «index index.php;» вставляем:

include snippets/wp-supercache.conf;

Create the /etc/nginx/snippets folder and, in it, a file named wp-supercache.conf with the following contents:

# WP Super Cache rules.

set $cache true;

# POST requests and urls with a query string should always go to PHP

if ($request_method = POST) {

set $cache false;

}

if ($query_string != "") {

set $cache false;

}

# Don't cache uris containing the following segments

if ($request_uri ~* "(/wp-admin/|/xmlrpc.php|/wp-(app|cron|login|register|mail).php|wp-.*.php|/feed/|index.php|wp-comments-popup.php|wp-links-opml.php|wp-locations.php|sitemap(_index)?.xml|[a-z0-9_-]+-sitemap([0-9]+)?.xml)") {

set $cache false;

}

# Don't use the cache for logged-in users or recent commenters

if ($http_cookie ~* "comment_author|wordpress_[a-f0-9]+|wp-postpass|wordpress_logged_in") {

set $cache false;

}

# Set the cache file

set $cachefile "/wp-content/cache/supercache/$http_host${request_uri}index.html";

set $gzipcachefile "/wp-content/cache/supercache/$http_host${request_uri}index.html.gz";

if ($https ~* "on") {

set $cachefile "/wp-content/cache/supercache/$http_host${request_uri}index-https.html";

set $gzipcachefile "/wp-content/cache/supercache/$http_host${request_uri}index-https.html.gz";

}

set $exists 'not exists';

if (-f $document_root$cachefile) {

set $exists 'exists';

}

set $gzipexists 'not exists';

if (-f $document_root$gzipcachefile) {

set $gzipexists 'exists';

}

if ($cache = false) {

set $cachefile "";

set $gzipcachefile "";

}

# Add cache file debug info as header

#add_header X-HTTP-Host $http_host;

add_header X-Cache-File $cachefile;

add_header X-Cache-File-Exists $exists;

add_header X-GZip-Cache-File $gzipcachefile;

add_header X-GZip-Cache-File-Exists $gzipexists;

#add_header X-Allow $allow;

#add_header X-HTTP-X-Forwarded-For $http_x_forwarded_for;

#add_header X-Real-IP $real_ip;

# Try in the following order: (1) cachefile, whose .gz copy gzip_static serves to clients that accept gzip, (2) normal url, (3) php

location / {

gzip_static on;

try_files $cachefile $uri $uri/ /index.php?$args;

}

# Set expiration for gzipcachefile

location @gzipcachefile {

expires 43200;

add_header Cache-Control "max-age=43200, public";

try_files $gzipcachefile =404;

}

# Set expiration for cachefile

location @cachefile {

expires 43200;

add_header Cache-Control "max-age=43200, public";

try_files $cachefile =404;

}

With these rules, nginx itself checks whether a .html file (or a compressed .html.gz) exists in the WP Super Cache folders. If it does, nginx serves it without involving php-fpm at all.
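The `if` blocks at the top of the snippet decide which requests skip the cache files. The bash sketch below restates those checks so they can be tried against sample requests. `may_use_supercache` is a hypothetical helper. Bash regular expressions only approximate nginx's PCRE matching, although the simple patterns used here match the same strings in both:

```bash
# Hypothetical restatement of the cache checks in wp-supercache.conf.
# Arguments: request method, query string, request URI, Cookie header.
# Returns 0 if the request may be served from a WP Super Cache file.
may_use_supercache() {
  local method=$1 query=$2 uri=$3 cookie=$4
  # POST requests and URLs with a query string always go to PHP.
  [ "$method" = "POST" ] && return 1
  [ -n "$query" ] && return 1
  local uri_re='(/wp-admin/|/xmlrpc.php|/wp-(app|cron|login|register|mail).php|wp-.*.php|/feed/|index.php|wp-comments-popup.php|wp-links-opml.php|wp-locations.php|sitemap(_index)?.xml|[a-z0-9_-]+-sitemap([0-9]+)?.xml)'
  local cookie_re='comment_author|wordpress_[a-f0-9]+|wp-postpass|wordpress_logged_in'
  # nginx's ~* is case-insensitive; emulate it, then restore the old setting.
  local saved rc=0
  saved=$(shopt -p nocasematch) || true
  shopt -s nocasematch
  if [[ $uri =~ $uri_re || $cookie =~ $cookie_re ]]; then rc=1; fi
  eval "$saved"
  return "$rc"
}

# Usage:
#   may_use_supercache GET "" /contacts/ "" && echo "served from cache file"
```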

Restart nginx, start the test and watch top in the console. And, lo and behold, not a single php-fpm process starts, whereas a couple dozen used to be spawned. Only nginx is working, with its two worker processes.
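Besides watching top, you can use the debug headers added by the snippet to see whether nginx found a cache file for a given URL. Below is a bash sketch. `supercache_status` is a hypothetical helper that reads response headers on stdin and prints the X-Cache-File-Exists value:

```bash
# Hypothetical helper: print the X-Cache-File-Exists header ("exists" or
# "not exists") from HTTP response headers read on stdin.
supercache_status() {
  awk -F': *' 'tolower($1) == "x-cache-file-exists" { gsub(/\r/, "", $2); print $2; found = 1 }
               END { if (!found) print "no header" }'
}

# Usage (https, because plain http is redirected by a server block without the snippet):
#   curl -sI https://domain.com/ | supercache_status
```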

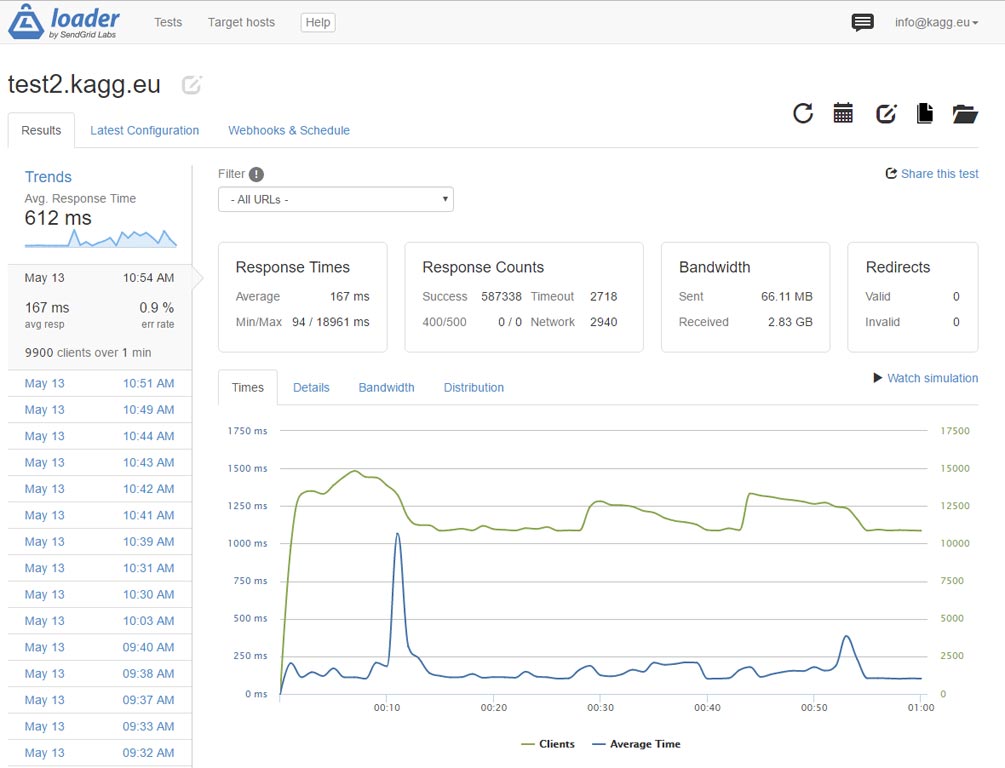

What's the result? The server can now serve the home page without https 7,500 times per second instead of 3,000! Recall that the page weighs 1.6 MB. The data stream is 2 Gbit per second, and it looks like we have hit DigitalOcean's bandwidth limit.

And what if we test how fast a small page is served, say /contacts on this site?

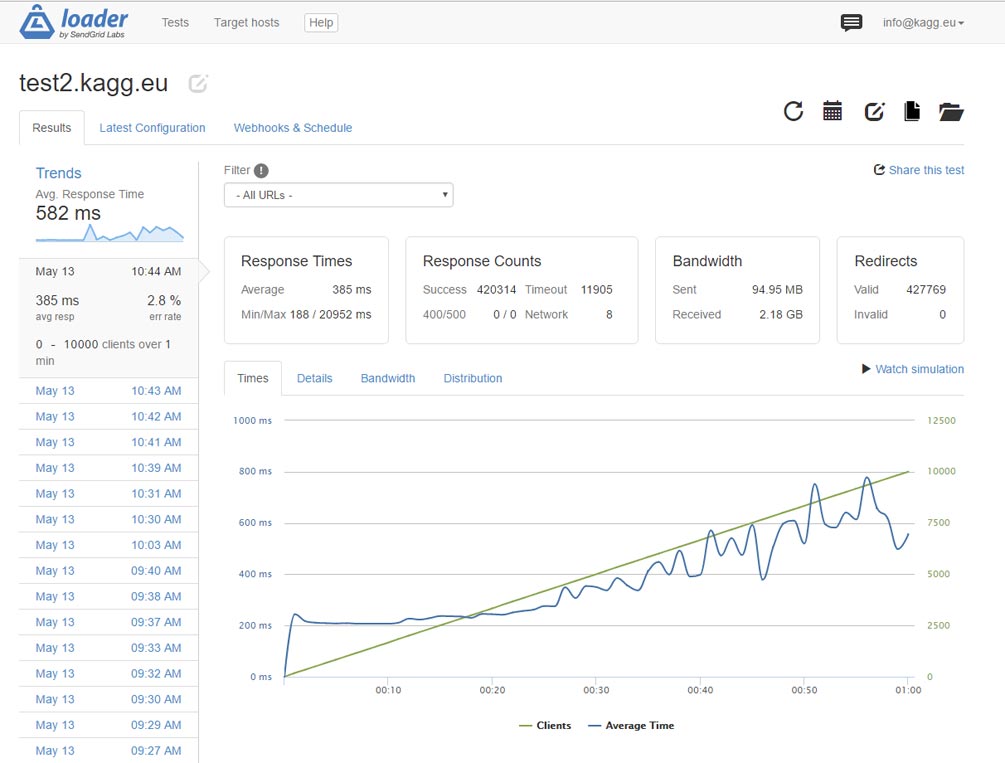

And here, apparently, we reached the server's limit. But we got the result from the article's title: 10,000 visits per second! To repeat: that is 10,000 visits per second to a completely real WordPress site, with plugins installed, a beautiful (and not very lightweight) theme, and so on.

The ramp-up scenario works too.

With https, this configuration showed a slight performance gain, up to 1,100 visits per second. The whole CPU apparently goes to encryption…

Summary

We have shown that a fairly standard WordPress site, with proper server configuration and caching, can serve 10,000 pages per second over http. That figure is for a small page; the 1.6 MB home page reached 7,500. Over a day, that is a staggering 800+ million visits.

With encryption enabled, the same site can serve at least 1,100 pages per second.

Tools and techniques used to achieve this result:

- A virtual private server (VPS) on DigitalOcean: 2 GB RAM, 2 CPUs, 40 GB SSD, 20 USD per month

- CentOS 7.3 64-bit

- Current versions of nginx, PHP 7 and MySQL (PHP 7.2 and MySQL 5.7 at the time of writing)

- The WP Super Cache plugin

- Microcaching in nginx

- No PHP at all for cached pages, thanks to rewrite rules in nginx

And no Varnish! 🙂

Comments

In MariaDB, the innodb_buffer_pool_chunk_size parameter is available starting with version 10.2.

For example, the Debian 9 repositories contain MariaDB 10.1, so you have to add other repositories.

This article does not cover MariaDB.

True, but everything works great with it too. I just mentioned it for reference. )

Excellent article, short and to the point. It applies to almost any distribution, with minor nuances. Everything works right away.

Thank you.

Hello. Thanks for the informative article. As the article suggests, I tried to adapt the settings for 1 GB of RAM, but now less than 25% is free. What mostly takes up the memory: nginx itself or MySQL? I haven't gotten to installing PHP yet.

Thanks for the feedback. Most likely MySQL is taking the memory. Use the top command; it shows everything.

With these parameters, microcaching breaks nginx: it won't start.

fastcgi_cache microcache;

fastcgi_cache_key $scheme$host$request_uri$request_method;

fastcgi_cache_valid 200 301 302 30s;

fastcgi_cache_use_stale updating error timeout invalid_header http_500;

fastcgi_pass_header Set-Cookie;

fastcgi_pass_header Cookie;

fastcgi_ignore_headers Cache-Control Expires Set-Cookie;

If nginx won't start, check the syntax with the command

nginx -t

The settings given in the article exactly match the nginx settings on this site, with one exception: microcaching is turned off (there is no fastcgi_cache microcache line).

Hi, could you tell me which value you use in /etc/php-fpm.d/www.conf:

pm = ?

dynamic, static or ondemand?

Hello.

pm = dynamic